
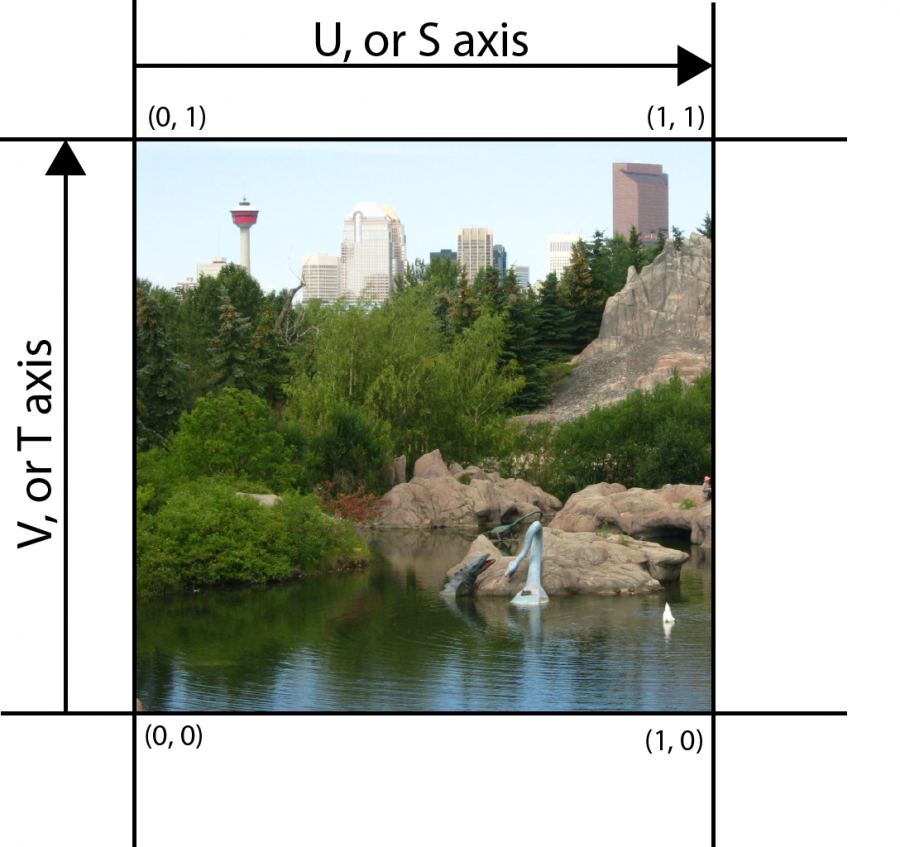

The clip coordinate system is a homogeneous coordinate system in the graphics pipeline that is used for clipping. In OpenGL or Vulkan, the output of the vertex processing shaders is considered to be in clip coordinates. In OpenGL, clip coordinates sit in the pipeline just after view coordinates and just before normalized device coordinates (NDC). A projection transformation takes objects' coordinates into clip coordinates. At that point, it can be determined efficiently, object by object, which portions of each object will be visible to the user. Polygons with vertices outside the viewing volume may be clipped to fit within it. All coordinates are then divided by w (the perspective divide) to obtain NDC.

Exercises

The DDS loader is implemented in the source code, but the texture coordinate modification is not. The V-coordinate inversion required by DXT-compressed textures can be done wherever you want: in your export script, in your loader, or in your shader. Change the code at the appropriate place to display the cube correctly. Experiment with the various DDS formats. Do they give different results? Different compression ratios? Try not to generate mipmaps in The Compressonator. What is the result? Give three different ways to fix this.

Conclusion

You just learnt to create, load and use textures in OpenGL. In general, you should only use compressed textures, since they are smaller to store, almost instantaneous to load, and faster to use. The main drawback is that you have to convert your images through The Compressonator (or any similar tool).

Window coordinates on Windows start with (0, 0) at the top left, whereas OpenGL window coordinates start at the lower left. To convert a mouse Y coordinate to OpenGL coordinates, subtract it from the viewport height: `winY = (float)viewport[3] - winY;`.

glScissor() defines a screen-space rectangle beyond which nothing is drawn (if the scissor test is enabled).
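The following C sketch gives a rough illustration of the coordinate pipeline described above. It assumes OpenGL conventions: a point is inside the view volume when -w ≤ x, y, z ≤ w, and window Y grows upward from the lower left. The names `Vec4`, `Vec3`, `inside_clip_volume`, `clip_to_ndc`, `ndc_to_window` and `mouse_y_to_gl` are hypothetical helpers and are not part of any OpenGL API. The viewport mapping handles only x and y and ignores glDepthRange.

```c
#include <stdbool.h>

/* Hypothetical types: a homogeneous clip-space position (like gl_Position) and an NDC point. */
typedef struct { float x, y, z, w; } Vec4;
typedef struct { float x, y, z; } Vec3;

/* OpenGL convention: the point is inside the view volume when -w <= x, y, z <= w. */
static bool inside_clip_volume(Vec4 c)
{
    return -c.w <= c.x && c.x <= c.w &&
           -c.w <= c.y && c.y <= c.w &&
           -c.w <= c.z && c.z <= c.w;
}

/* Perspective divide: clip coordinates -> normalized device coordinates. */
static Vec3 clip_to_ndc(Vec4 c)
{
    Vec3 ndc = { c.x / c.w, c.y / c.w, c.z / c.w };
    return ndc;
}

/* Viewport transform for x and y only, as set by glViewport(vx, vy, width, height):
   NDC [-1, 1] -> window coordinates with the origin at the lower left. */
static void ndc_to_window(Vec3 ndc, int vx, int vy, int width, int height,
                          float *wx, float *wy)
{
    *wx = (float)vx + (ndc.x + 1.0f) * 0.5f * (float)width;
    *wy = (float)vy + (ndc.y + 1.0f) * 0.5f * (float)height;
}

/* Mouse Y grows downward from the top left; OpenGL window Y grows upward from the
   lower left. viewport[3] is the height, e.g. from glGetIntegerv(GL_VIEWPORT, viewport). */
static float mouse_y_to_gl(float mouseY, const int viewport[4])
{
    return (float)viewport[3] - mouseY;
}
```

For example, the clip-space point (1, -1, 0.5, 2) divides to NDC (0.5, -0.5, 0.25). In an 800×600 viewport at the origin, it lands at window position (600, 150).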
glViewport() sets the location and size of the screen-space viewport region, but the rasterizer can still occasionally render pixels outside that region.

Inversing the UVs

DXT compression comes from the DirectX world, where the V texture coordinate is inverted compared to OpenGL. If you use compressed textures, you'll therefore have to use (coord.u, 1.0 - coord.v) to fetch the correct texel.

The UV coordinates are stored in a `static const GLfloat g_uv_buffer_data[]` array (its contents are not reproduced here). The loader ends by freeing its file buffer and returning the texture ID: `free(buffer); return textureID;`.

You'll learn shortly how to do this yourself. Here is the declaration of the loading function:
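One way to apply the V inversion is a single pass over the UV data in the loader. The C sketch below assumes the UVs are stored as interleaved (u, v) float pairs, like a `g_uv_buffer_data` array. The name `flip_v_in_place` is a hypothetical helper.

```c
#include <stddef.h>

/* Hypothetical helper: flip the V component of interleaved (u, v) pairs in place,
   v' = 1 - v, so UVs authored for DirectX's convention sample correctly in OpenGL. */
static void flip_v_in_place(float *uv, size_t pair_count)
{
    for (size_t i = 0; i < pair_count; ++i) {
        uv[2 * i + 1] = 1.0f - uv[2 * i + 1];  /* index 2*i is u, 2*i+1 is v */
    }
}
```

Doing the flip once at load time leaves the shaders unchanged. Alternatively, the same effect is available per fragment by sampling with `1.0 - coord.v` in the shader.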

Knowing the BMP file format is not crucial: plenty of libraries can load BMP files for you. But the format is very simple and can help you understand how things work under the hood. So we'll write a BMP file loader from scratch, so that you know how it works, and never use it again.

These coordinates are used to access the texture. Notice how the texture is distorted on the triangle.

In the fragment shader, the built-in input `gl_FragCoord` (declared as `in vec4 gl_FragCoord;`) contains the window-relative coordinates of the current fragment. It is available only in the fragment language and holds the (x, y, z, 1/w) values for the fragment.
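The first step of such a loader is parsing the 54-byte header of an uncompressed 24-bit BMP, sketched below in C. The sketch assumes the standard BITMAPFILEHEADER + BITMAPINFOHEADER layout:

- "BM" signature at offset 0
- pixel-data offset at 0x0A
- width at 0x12
- height at 0x16
- bits per pixel at 0x1C
- compression at 0x1E
- image size at 0x22

`BmpInfo`, `read_le32` and `parse_bmp_header` are hypothetical names. Reading the file and uploading the pixels with glTexImage2D are left out.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical struct: fields of interest from a 54-byte BMP header
   (14-byte BITMAPFILEHEADER followed by a 40-byte BITMAPINFOHEADER). */
typedef struct {
    uint32_t data_pos;   /* offset of the pixel data from the start of the file */
    uint32_t image_size; /* size of the pixel data in bytes */
    int32_t  width;
    int32_t  height;
} BmpInfo;

/* Read a little-endian 32-bit value byte by byte, avoiding unaligned pointer casts. */
static uint32_t read_le32(const unsigned char *p)
{
    return (uint32_t)p[0] | ((uint32_t)p[1] << 8) |
           ((uint32_t)p[2] << 16) | ((uint32_t)p[3] << 24);
}

/* Returns 0 on success, -1 if the header is not an uncompressed, bottom-up, 24-bit BMP. */
static int parse_bmp_header(const unsigned char *header, size_t len, BmpInfo *out)
{
    if (len < 54 || header[0] != 'B' || header[1] != 'M')
        return -1;

    unsigned bpp = (unsigned)header[0x1C] | ((unsigned)header[0x1D] << 8);
    uint32_t compression = read_le32(header + 0x1E);
    if (bpp != 24 || compression != 0)       /* 0 == BI_RGB, i.e. uncompressed */
        return -1;

    out->data_pos   = read_le32(header + 0x0A);
    out->width      = (int32_t)read_le32(header + 0x12);
    out->height     = (int32_t)read_le32(header + 0x16);
    out->image_size = read_le32(header + 0x22);
    if (out->width <= 0 || out->height <= 0) /* negative height = top-down; not handled */
        return -1;

    /* Some writers leave these fields at 0; fall back to defaults.
       width * height * 3 ignores BMP's 4-byte row padding. */
    if (out->image_size == 0)
        out->image_size = (uint32_t)out->width * (uint32_t)out->height * 3u;
    if (out->data_pos == 0)
        out->data_pos = 54;
    return 0;
}
```

When the image-size field is 0, the code falls back to width × height × 3. This ignores the 4-byte row padding BMP uses, so the estimate is exact only when each row's byte count (width × 3) is already a multiple of 4.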

When texturing a mesh, you need a way to tell OpenGL which part of the image has to be used for each triangle. This is done with UV coordinates: each vertex can have, on top of its position, a couple of floats, U and V. This tutorial also covers how to load textures more robustly with GLFW, and what filtering and mipmapping are and how to use them.
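The C sketch below makes the role of U and V concrete. It assumes nearest-neighbour lookup with edge clamping, roughly what GL_NEAREST with GL_CLAMP_TO_EDGE does. It also counts the levels of a full mipmap chain. `uv_to_texel` and `mip_level_count` are hypothetical helpers; real sampling happens on the GPU.

```c
/* Hypothetical helper: map a UV pair to the nearest texel of a width x height image.
   UVs outside [0, 1] are clamped, roughly like GL_NEAREST with GL_CLAMP_TO_EDGE.
   ty counts rows from the first row of uploaded data, which OpenGL treats as v = 0. */
static void uv_to_texel(float u, float v, int width, int height, int *tx, int *ty)
{
    if (u < 0.0f) u = 0.0f;
    if (u > 1.0f) u = 1.0f;
    if (v < 0.0f) v = 0.0f;
    if (v > 1.0f) v = 1.0f;
    int x = (int)(u * (float)width);    /* truncation == floor for non-negative values */
    int y = (int)(v * (float)height);
    *tx = x < width ? x : width - 1;    /* u == 1.0 would index one past the edge */
    *ty = y < height ? y : height - 1;
}

/* Hypothetical helper: number of levels in a full mipmap chain,
   halving the larger dimension until it reaches 1. */
static int mip_level_count(int width, int height)
{
    int levels = 1;
    int size = width > height ? width : height;
    while (size > 1) {
        size /= 2;
        ++levels;
    }
    return levels;
}
```

For a 256×128 texture, UV (0.5, 0.25) selects texel (128, 32). A 256×256 texture has 9 mipmap levels (256, 128, …, 1).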
